Open Source, SaaS, and the Silence After Unlimited Code Generation

The End of Feedback

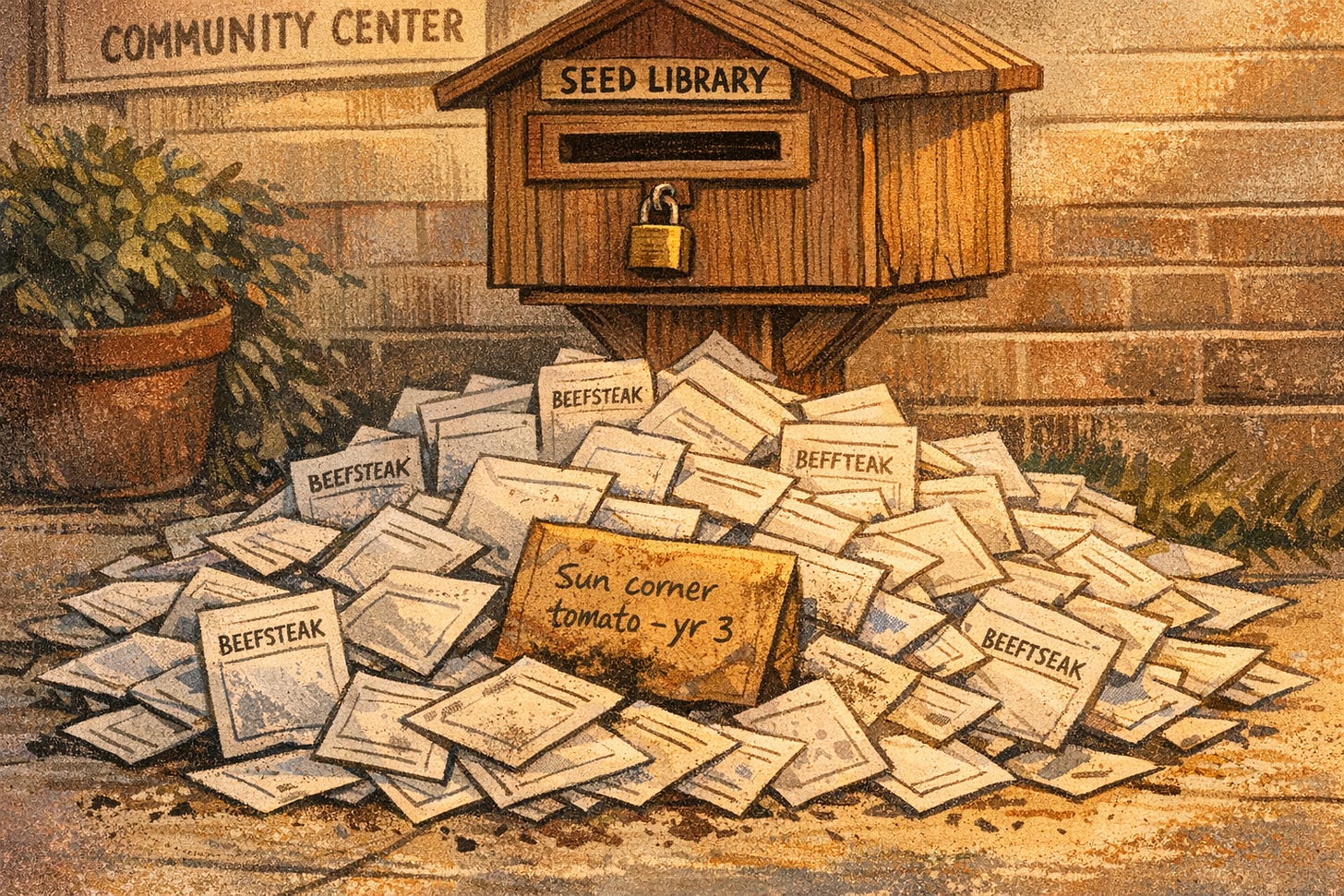

There’s a seed library at the community center near my house. Or there was. The way it worked: you’d take a packet of tomato seeds, grow your tomatoes, save some seeds from your best plants, and bring them back.

Then last year, something changed. People started dropping off bags and bags of seeds. They’d all gotten those new bulk seed generators, which had become cheap enough for anyone to use. Hundreds of seed packets at a time, all labeled perfectly, all sorted into neat little envelopes. They looked great. But half of them wouldn’t germinate. Some were mislabeled: you’d plant what said “beefsteak” and get nothing, or worse, get something that choked out everything around it. A few were just empty envelopes with very convincing labels.

The librarian spent her whole spring sorting through the avalanche, trying to separate the real contributions from the junk. She couldn’t keep up. Every morning there’d be a new pile on the doorstep. So one day she just locked the drop-off box.

After that, two things happened. The flood stopped, obviously. But so did everything else. The people who’d been quietly bringing back their one envelope of weird, wonderful, sun-adapted seeds? They stopped coming too. Oh, they were still growing things; they just didn’t need the library anymore. The same tools that made it easy to flood the box with junk made it easy to grow whatever you wanted at home.

“The gardens have never been better,” the librarian told me. “I see them everywhere. I just can’t see what’s in them anymore.”

Drowning

So here’s what’s happening. Today.

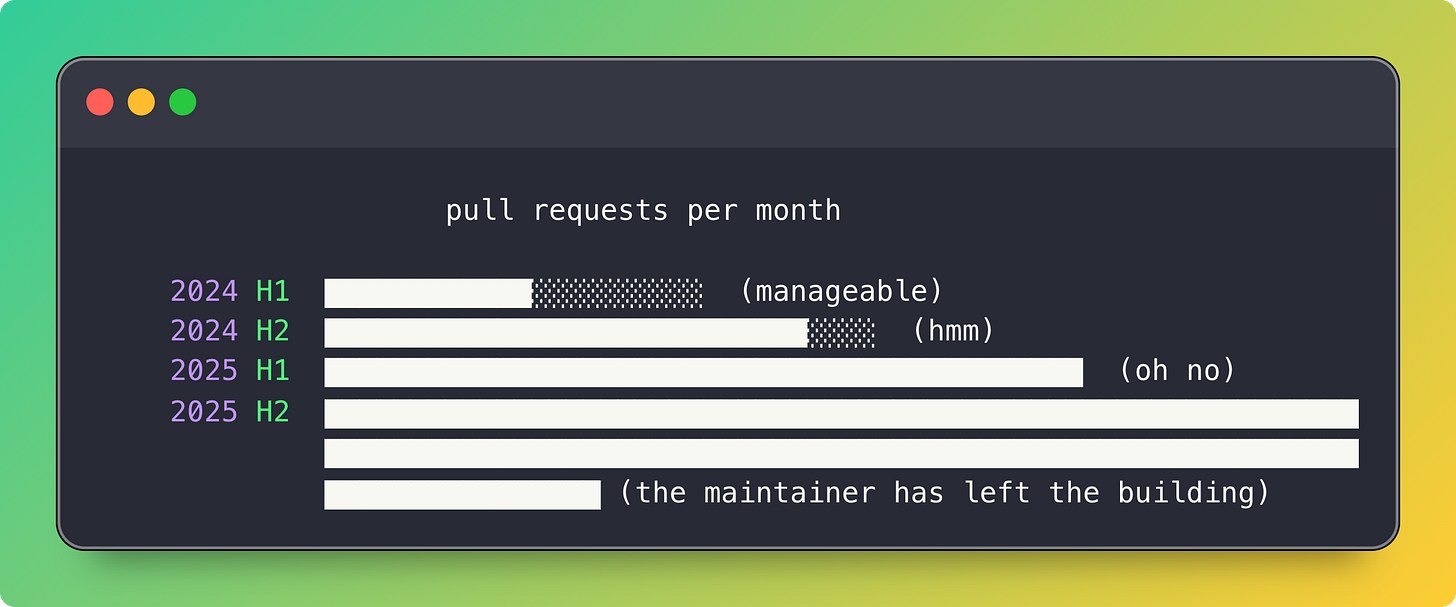

Open source maintainers are drowning in AI-generated pull requests. And not the good kind of drowning. Steve Ruiz at tldraw described getting PRs that looked incredible. Formally correct, tests passing, beautiful commit messages... and then he started noticing some patterns. Authors ignoring the PR template. Large PRs abandoned the moment someone asked a question. Commits spaced too close together, like someone hit a button and went to make coffee.

Because someone hit a button and went to make coffee.

Daniel Stenberg shut down cURL’s bug bounty after AI submissions hit 20% and the valid rate dropped to 5%. Mitchell Hashimoto banned AI-generated code from Ghostty almost entirely. Tldraw now auto-closes all external pull requests.

The flood of bad PRs? That’s just the surface problem. What about what happens after the door closes?

Quiet

People stop knocking.

I don’t mean the bot army. I mean real people, with real needs, who would have been contributors in another timeline. The ones you actually want contributions from. They’re not submitting PRs anymore. They’re not filing issues. They’re not even complaining in Discord. They’re just... forking and moving on.

I even did this myself. I forked a code image library for this newsletter. Added what I wanted. Changed what I didn’t like. I have a bunch of friends who have done the same thing with other projects: forked, customized, kept going. None of us pushed changes back upstream. We’re not opposed to it, but... well, do the math:

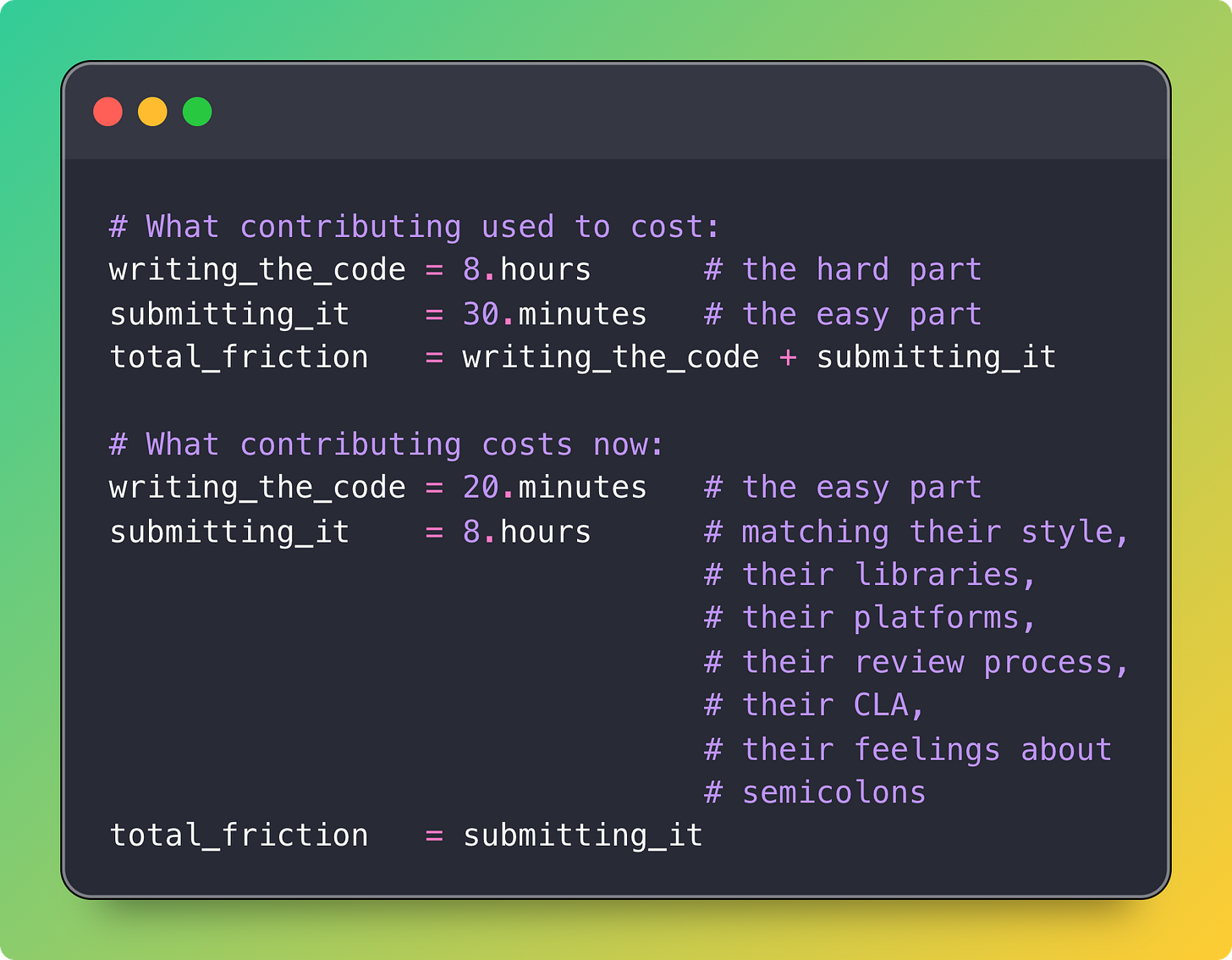

The economics flipped. It used to be expensive to write the code and cheap to submit it. Now it’s cheap to write the code and expensive to get it merged. The cost of self-sufficiency dropped below the cost of communication.

And that caring, that friction of contributing? That was the magic of open source. User becomes contributor becomes maintainer. Someone scratches an itch, sends a patch, learns something, sticks around. That loop only works when participating in it is easier than not participating. And only if contributions are welcomed in the first place.

$1,100

Look at what’s going on with Cloudflare and Next.js.

Next.js is the most popular React framework. Millions of developers. Vercel spent years building it, writing meticulous documentation, crafting comprehensive tests. They made their software legible, well-documented, well-tested.

On February 13th, a Cloudflare engineering manager sat down with Claude and started rebuilding it.

By the next afternoon, 10 out of 11 routes in Next.js’s own demo app were rendering. By day three, complete applications were shipping to Cloudflare’s infrastructure. By the end of the week: 94% API surface coverage. 1,700 Vitest tests. 380 Playwright E2E tests. Builds 4.4x faster. Bundles 57% smaller. They called it Vinext.

Total cost: approximately $1,100 in API tokens.

They used Next.js’s own test suite as the guide. All those years Vercel spent writing careful, comprehensive tests? They became the blueprint for their own replacement. The documentation that made Next.js a joy to use made it a joy to clone.

(It’s like spending years writing the world’s most detailed diary and then discovering someone used it to become you, but slightly faster and running on different infrastructure.)

Tldraw saw this happen, and Steve Ruiz filed an issue to move their 327 test files to a closed-source repo. Meticulously scoped. Detailed migration plan. The whole community took it completely seriously. Blog posts were written, Hacker News threads spawned, people started debating whether SQLite had been right all along to keep their 92 million lines of tests private.

It was a joke. (Probably. I think? The line between satire and strategy is getting very thin lately.) They also filed one to translate their source code to Traditional Chinese to slow down AI agents, which is... probably just as futile. The tests are already in git history. And more importantly, an AI doesn’t need your tests. Show it the public API, the documentation, a few examples, and it writes its own. Different from yours, but accomplishing the same thing.

It’s almost as if, if you don’t want people cloning your software... you can’t publish it at all. Let alone open source it.

What do you even do with that?

Feedback

This is bigger than just open source, though. I think this is about feedback. All feedback. The entire concept of a feedback loop between a maker and the people who use what they make.

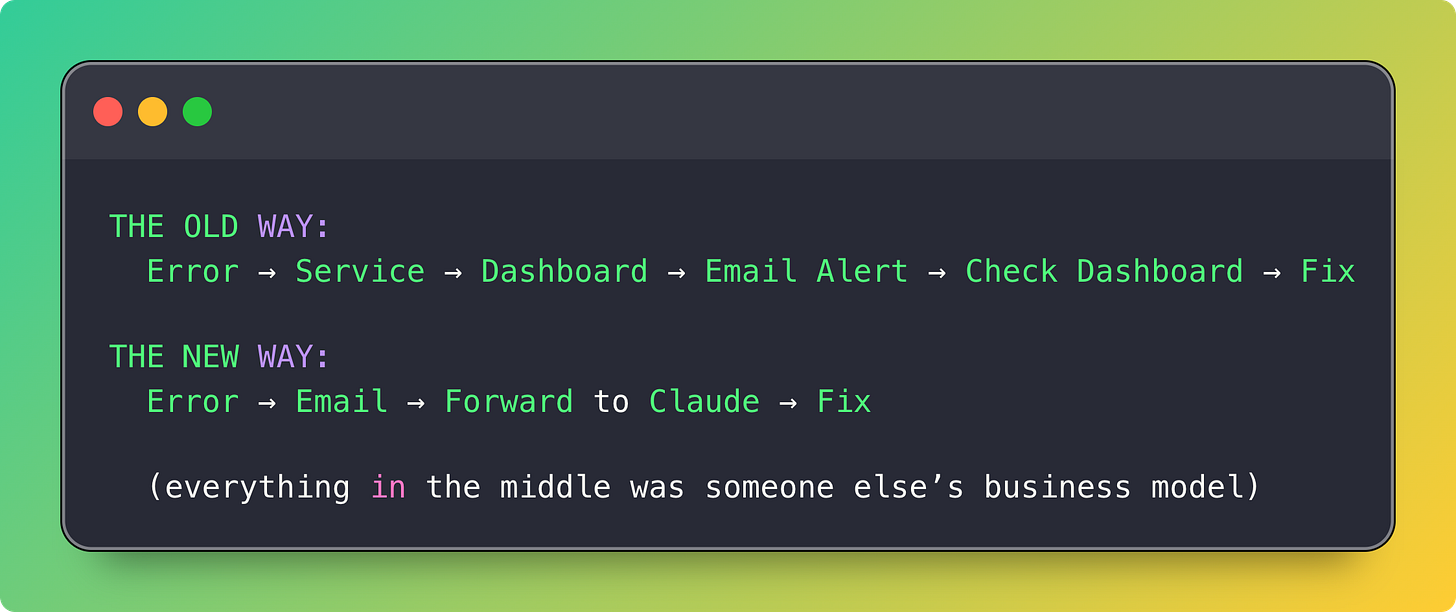

I was building a new app the other day. Early stages, but needed basic error tracking. The old me (like, six months ago me) would have evaluated three or four services, signed up for a freemium tier, integrated their SDK, configured alert rules, maybe eventually paid $20/month when I hit the free tier limits.

Instead I told Claude: “Build me a minimal error tracker that emails me when something breaks.”

Twenty minutes later it worked. It catches errors via Rails.error.subscribe, kicks off a background job, and sends me an email with all the relevant details. It doesn’t have dashboards or trend analysis or any of the hundred features that a real error tracking service would give me. But it emails me when something breaks, and then I forward that email to Claude to fix the problem, and that’s all I actually need right now. I didn’t have to create an account or agree to a terms of service or give anyone my credit card or receive a single onboarding email.

My first instinct was to open source it. Then I thought: why? It’s fifty lines of code that are deeply specific to my setup. Nobody wants my version. What they want is their version. So here, have a prompt instead:

Hey Claude, I’m building an MVP Rails 8 app using Solid and I need something to keep track of and triage errors. Let’s not introduce any external dependencies yet, can you create some code for us in /lib that uses Rails.error.subscribe to catch errors and then kick off a job to email all the relevant error information to my admin email?

That’s almost exactly what I used. Customize it to your setup. Maybe you want errors stored in a database. Maybe you want them sent to your Discord. Maybe you want deduplication. Maybe you want them shipped directly to your OpenClaw on Telegram so the loop closes itself entirely and you only hear about it after it’s already been fixed. I don’t know.
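For a sense of the shape of what comes back from a prompt like that, here’s a minimal sketch. It’s an illustration, not the fifty lines described above. The only real Rails pieces are Rails.error.subscribe and the report method signature it expects from a subscriber. The class name ErrorEmailSubscriber, the deliver callable, and the ErrorReportJob named in the comment are made-up names. It’s written as plain Ruby so it runs without a Rails app.

```ruby
# Hypothetical sketch: an error subscriber that formats a report and hands it
# to whatever "deliver" callable you give it (a mailer, a job, a webhook).
class ErrorEmailSubscriber
  # deliver: any object responding to call(subject, body).
  def initialize(deliver:)
    @deliver = deliver
  end

  # This is the method Rails calls on objects registered with
  # Rails.error.subscribe.
  def report(error, handled:, severity:, context:, source: nil)
    subject = "[#{severity}] #{error.class}: #{error.message}"
    body = [
      "Handled: #{handled}",
      "Source: #{source || 'unknown'}",
      "Context: #{context.inspect}",
      "Backtrace:",
      *Array(error.backtrace).first(10) # keep the email short
    ].join("\n")
    @deliver.call(subject, body)
  end
end

# In a Rails initializer you might register it like this (assumption: an
# ErrorReportJob that emails its arguments exists in your app):
#
#   Rails.error.subscribe(
#     ErrorEmailSubscriber.new(
#       deliver: ->(subject, body) { ErrorReportJob.perform_later(subject, body) }
#     )
#   )

# Standalone demo: collect "emails" in an array instead of sending them.
sent = []
subscriber = ErrorEmailSubscriber.new(deliver: ->(subject, body) { sent << [subject, body] })

begin
  raise ArgumentError, "boom"
rescue => e
  subscriber.report(e, handled: true, severity: :error, context: { user_id: 42 }, source: "demo")
end

puts sent.first.first # prints "[error] ArgumentError: boom"
```

The deliver callable is where the customizing happens: swap it for a database insert, a Discord webhook, or a check that drops duplicates before anything gets sent.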

That error tracking SaaS I didn’t sign up for? They’ll never hear from me. Their product is probably great. But I’m not a lost customer or a churned customer or a lead that didn’t convert. I’m someone who would have been a customer in a world where building my own version took more than twenty minutes.

I wonder how many of us there are now. How many ghost users, for how many products, building their own versions of things that already exist, perfectly well, behind a sign-up page they’ll never visit. The moat was supposed to be the accumulated understanding of what goes wrong, years of edge cases, the stuff you can’t get from a prompt. But for my needs, right now, the prompt was enough.

Is this just an MVP thing? Do I grow out of it and eventually pay for the real service? Maybe. But maybe I just keep telling Claude to add features to my fifty lines of code and it becomes a hundred lines and then two hundred and at some point it’s not worse than the SaaS, it’s just different, and it’s mine, and the SaaS never finds out I existed.

Both feel equally plausible, but something about this reminds me of that book about how dangerous it is to ignore the bottom of the market when it suddenly starts getting its needs met somewhere else.

Antisocial Coding, or Just Differently Social?

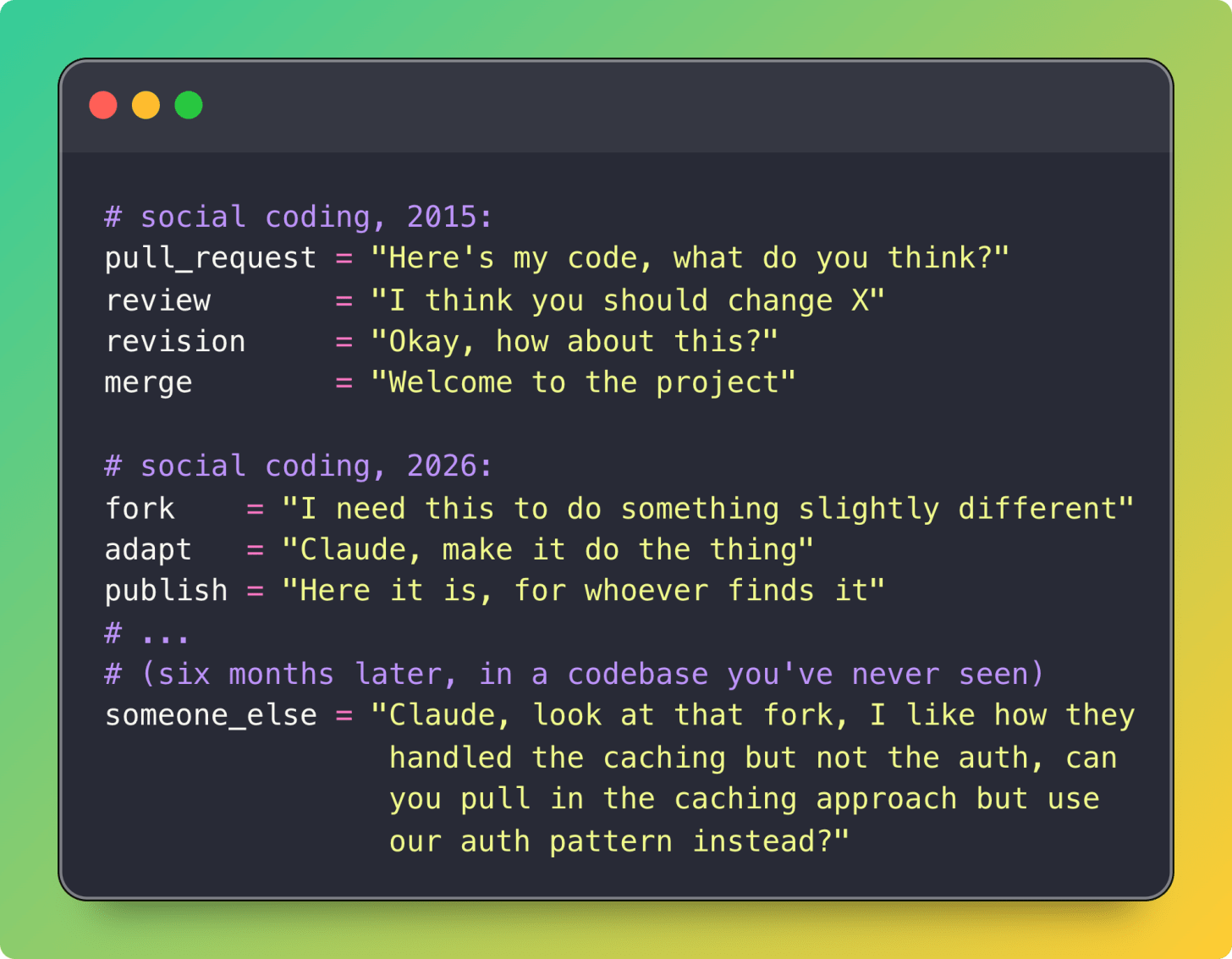

Justin Searls has also been thinking about this and published a post a few days ago called “Agents are ushering in the Antisocial Coding era.” He frames what’s happening as the end of GitHub’s old “Social Coding” promise. If you’ve read this far, it’s clear I think he’s right about the symptoms.

But I’m not sure the social part actually goes away. I think it changes shape.

Consider what happens when I fork that code image library and add my own features. My friend forks the same library and adds different features. Someone else forks my fork and takes it in a third direction. The original maintainer sees something interesting in one of these forks, points their Claude at it, pulls the idea back in, refracted through their own needs, without either of us ever exchanging a word about it.

That’s still social. It’s just not social in the way we’re used to.

GitHub popularized the PR-and-review model of collaboration. What’s the platform that’s built for the fork-and-reabsorb model? For a world where the most valuable thing is the ability to see what’s happening across a constellation of forks? Where the maintainer’s job is really just to notice what people are doing with their software out in the wild and decide what to pull back in?

I don’t think that platform exists yet, but maybe it needs to?

The Map

I went back to the seed library last week. The librarian had done something I didn’t expect. She’d put up a corkboard on the wall with a map of the whole neighborhood, hand-drawn on butcher paper, and asked people to pin where their garden was and what they’d changed from the original seeds.

She said that a few weeks ago, she’d been walking her dog past a house in the part of town with the heavy clay soil where nothing from the library has ever grown well. And there, in the front yard, was the most ridiculous tomato garden she’d ever seen.

She knocked on the door. The woman who answered had taken library seeds three years ago, and they’d failed, and she’d been crossing and adjusting varieties in her backyard ever since. Never thought to bring any back. “I figured my weird clay dirt tomatoes wouldn’t be useful to anyone else,” she said.

But across town, there are six other houses with clay soil. And they’re all still struggling.

“That’s the thing,” the librarian told me. “The gardens have never been better. But the gardeners don’t know about each other. Nobody’s connected because there’s no reason to walk through my door anymore.”

“What I really need,” she said, “is a way to see all the gardens at once. What changed and where. Just so we can see what’s out there.”

That thing doesn’t exist yet. But she put the map up anyway.

So, okay. In the spirit of all of this, here’s my fork of codeimage. The one I mentioned earlier. That I’ve been sitting on for months because I never submitted a PR and never really planned to.

I’d been holding off on even putting it up publicly because... well... what’s the point? There’s no ForkHub. No SourceFork. No ForkLab. No place designed for saying “hey, here’s what I changed and why” without the overhead of “please, sir, may I merge.” No platform where a maintainer can browse the constellation of forks and see what people actually needed their software to do differently.

But the librarian put her map up anyway, even though most of it was empty. So I’m putting mine up too. A pin on a map that doesn’t exist yet. A little flag that says: I needed something different, here’s what I did about it.

It’s slop. But it’s slop that works for me. And maybe someone sees it. Maybe they don’t. Maybe the map fills in over time. Maybe someone builds the map.

The seed library metaphor is the best framing I've seen for this. Especially the ending — the librarian can see the gardens are better but can't see what's in them.

I've been running into a version of this at the practice level, not code. When you build a governed AI workflow — decision logs, cross-project handoffs, standing policies — the same fork-and-customize dynamic kicks in. Everyone builds their own version. The gardens are thriving. But nobody can see what's working across them.

Your librarian's corkboard is the right instinct. I keep wondering whether the missing piece isn't a platform for sharing artifacts but one for making the patterns legible — what changed, why, and what it revealed.

Here's to the map! Also, I like the story and the interleaved format; it's a very nice metaphor and it complements the point.